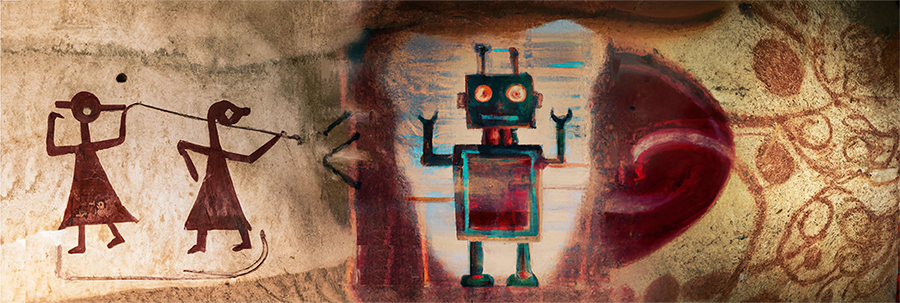

In an underground burial temple located within the EU’s southernmost State, one can find a primitive drawing of a spiralling never-ending red tree.

Archaeologists opine that the millennia-old Saflieni Hypogeum’s ‘Tree of Life’ was painted to give meaning to death. There is no evidence that the Maltese prehistoric biosphere sustained red spiralling trees. If archaeologists did not inform us about this pictorial human bias, one might have assumed that never-ending trees did exist in Malta.

Humans understand that trees are not never-ending and that the red artefact is merely an imaginary depiction. Artificial Intelligence (“AI”), on the other hand, knows that Malta’s never-ending red tree is an artefact only if someone has programmed the AI system to treat it as such. In its raw form, an AI system that is given only photos of never-ending red trees would not know that trees are usually green and finite. If no one amends the AI system to classify the red tree as art, it could flag normal green trees as not being real trees. Were that to happen, a human-biased input would have shaped the AI output and multiplied the initial human bias.
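The mechanism described above can be illustrated with a deliberately simplistic sketch. The `Picture` and `NaiveTreeDetector` names are hypothetical and invented purely for illustration; real AI systems are far more sophisticated, but the way a one-sided training set propagates into the output is the same in principle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Picture:
    """A hypothetical, highly simplified description of an image."""
    colour: str
    spiralling: bool


class NaiveTreeDetector:
    """Toy model: treats as a 'tree' only what resembles its training data."""

    def __init__(self):
        self.seen = set()

    def train(self, examples):
        # The model memorises the feature combinations it was shown.
        for picture in examples:
            self.seen.add((picture.colour, picture.spiralling))

    def is_tree(self, picture):
        # Anything unlike the training data is rejected.
        return (picture.colour, picture.spiralling) in self.seen


# Human-biased input: the system is only ever shown the red spiralling artefact.
biased_training_set = [Picture("red", True)] * 50

detector = NaiveTreeDetector()
detector.train(biased_training_set)

red_artefact = Picture("red", True)
green_tree = Picture("green", False)

print(detector.is_tree(red_artefact))  # True: the artefact is accepted as a tree
print(detector.is_tree(green_tree))    # False: a real tree is flagged as not a tree
```

In this sketch nothing in the system is able to question the training set, which is why the correction has to come from a human who recognises that the input was an artistic depiction rather than a record of nature.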

Whilst this example might sound exaggerated, the ‘Proposal for a Regulation laying down harmonised rules on artificial intelligence’ (the “draft EU AI Act”) specifically seeks to limit the replication of discrimination which arises from human bias. It does so through Article 14, which requires human oversight of high-risk AI systems. Specifically, Article 14(4)(b) provides that human oversight involves (but is not limited to):

“Remain[ing] aware of the possible tendency of automatically relying or over-relying on the output produced by a high-risk AI system (‘automation bias’), in particular for high-risk AI systems used to provide information or recommendations for decisions to be taken by natural persons.”1

Recital 17 of the draft EU AI Act notes that AI systems used by public authorities for general social scoring of individuals may result in discriminatory outcomes and the exclusion of certain groups. These systems have the potential to violate fundamental rights such as the right to non-discrimination. Recital 17 adds that the social scores generated by such systems may lead to unfavourable treatment of individuals or whole groups in social settings that are unrelated to the context in which the data was originally generated or collected.

Furthermore, Article 15 of the draft EU AI Act emphasises that “the technical solutions to address AI-specific vulnerabilities shall include, where appropriate, measures to prevent and control attacks trying to manipulate the training dataset.”2 In other words, the EU legislator is creating a safeguard against the bias in the data which is used to train the AI system because if the input is biased, the AI output would exacerbate such bias.

The safeguards against human bias in the draft EU AI Act apply only to high-risk AI systems. Trading algorithms and various other uses of AI within asset management will mostly be classified as limited-risk AI systems. Consequently, such systems will only be subject to the transparency obligations under Article 52 of the draft EU AI Act, and only where they interact with natural persons.

Directive 2009/65/EC on the coordination of laws, regulations and administrative provisions relating to UCITS (“UCITS Directive”), as amended by Directive 2014/91/EU of the European Parliament and of the Council of 23 July 2014 (“UCITS V”), regulates UCITS funds. It contains more obligations in favour of investors than Directive 2011/61/EU of the European Parliament and of the Council of 8 June 2011 on Alternative Investment Fund Managers (“AIFMD”), which regulates Alternative Investment Funds (“AIFs”) and AIF Managers (“AIFMs”). The reason is that AIFs and AIFMs are generally non-retail and therefore require less investor protection.

The general reporting requirements for UCITS funds are more rigorous because a UCITS is a low-risk, retail-oriented financial product. Thus, one would expect any use of AI in EU asset management to be more heavily regulated under the UCITS framework than under the AIFMD. Yet, to date, neither framework contains any clear rules which could be used to mitigate human-biased AI in its respective investment structures.

Article 52 of the UCITS Directive lays down a diversification rule which can inadvertently cap the damage caused by a mistake rooted in human bias at a maximum of 10% of each fund’s assets. Nevertheless, Article 52 of the UCITS Directive focusses on limiting exposure to a single stock or bond rather than on actually mitigating human bias in AI.
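As a rough illustration of how such a diversification cap contains a biased decision, the sketch below checks a hypothetical portfolio against a single 10% limit. The function name, the issuer names and the figures are assumptions made for illustration only, and the single flat threshold is a simplification of the more layered limits found in Article 52 itself.

```python
def concentration_breaches(holdings, limit=0.10):
    """Return the issuers whose share of total fund assets exceeds `limit`.

    `holdings` maps a (hypothetical) issuer name to the value held.
    The flat 10% default is a simplifying assumption, not the full rule.
    """
    total = sum(holdings.values())
    if total <= 0:
        raise ValueError("fund must hold assets with a positive total value")
    return {
        issuer: value / total
        for issuer, value in holdings.items()
        if value / total > limit
    }


# Hypothetical fund in which a biased AI model has over-weighted one issuer.
holdings = {
    "Issuer A": 250, "Issuer B": 90, "Issuer C": 90, "Issuer D": 90,
    "Issuer E": 90, "Issuer F": 90, "Issuer G": 75, "Issuer H": 75,
    "Issuer I": 75, "Issuer J": 75,
}

breaches = concentration_breaches(holdings)
for issuer, weight in breaches.items():
    print(f"{issuer} exceeds the limit with {weight:.0%} of fund assets")
```

A check of this kind limits how much of the fund a single biased recommendation can affect, but it says nothing about whether the recommendation was biased in the first place.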

In a publication on the application of the UCITS Directive which was last revised in 2023, the European Securities and Markets Authority (“ESMA”) states that “for each of the data items, firms should not artificially alter their practices in a way that would lead to the reporting being misleading.”3 ESMA’s UCITS requirement comes closer to the restrictions on human bias envisaged in the draft EU AI Act, as it focusses on data rather than on the profit margin of the investment. Nevertheless, the UCITS Directive itself does not yet provide for any obligations relating to AI, and neither does the AIFMD.

Just as never-ending red trees do not exist in nature (and archaeologists had to note that Saflieni’s never-ending tree depiction was biased by humans), so too must UCITS Management Companies and AIF Managers retain full human oversight to prevent AI from creating undesired results which are rooted in human bias. Nonetheless, any human oversight of AI systems in investment services is currently derived not from legal principles on AI but from the quintessential principle of protecting the investor.

Currently, the legislative situation is somewhat commensurate with the limited use of AI in asset management, as the industry still retains discretionary human oversight over AI. Be that as it may, if current laws are not amended, the more prevalent use of AI in asset management will expose various grey areas and lacunae which the legislator has not yet addressed in order to limit the risk of human bias arising from AI usage in EU investment funds.

Footnotes:

1. European Commission, Proposal for a Regulation laying down harmonised rules on artificial intelligence (2021) <https://digital-strategy.ec.europa.eu/en/library/proposal-regulation-laying-down-harmonised-rules-artificial-intelligence> Article 14(4)(b).

2. Ibid., Article 15.

3. European Securities and Markets Authority, Application of UCITS Directive (2023) <https://www.esma.europa.eu/sites/default/files/library/esma34_43_392_qa_on_application_of_the_ucits_directive.pdf> page 46.

Disclaimer: This document does not purport to give legal, financial or tax advice. Should you require further information or legal assistance, please do not hesitate to contact Dr. Mario Mizzi